I don’t think AI priming is mysterious. I think people use mystical language for something that is actually pretty observable once you’ve worked with models long enough.

People talk about AI priming like it’s some vague spell.

Say the right thing. Set the tone. Hope the model gets it.

But from a practical point of view, priming is a flow.

- A person gives input.

- That input shapes how the model approaches the task.

- The model forms a local working mode.

- That mode affects what it notices, how it reasons, and what it emphasizes.

- If any part of that pattern gets stored somewhere, it can show up again later.

That’s priming.

Not magic. Not proof of hidden personhood. Not something mystical. Just structured influence moving through a system.

A simple way to think about it

Priming usually looks like this:

## THE_PRIMING_FLOW

input:

shaping: temporary

pattern: local_working

persistence: maybe

later_reuse: true

That’s the whole thing.

Some inputs only affect the next reply. Some shape the whole session. Some get written into memory, tools, or app state and become reusable.

The important part is that not every influence survives. A lot of what people call priming is just short-lived shaping—what I call Context Hygiene. Useful in the moment. Gone afterward.

Example

Say a mathematician tells a model:

- Be rigorous

- Define terms first

- Prefer proofs over intuition

- Challenge hidden assumptions

At first, that is just context.

Then the model starts inferring what kind of answer counts as good. It begins favoring a certain style:

- clearer definitions

- more stepwise reasoning

- less hand-waving

- more consistency checks

That is the prime showing up in behavior.

The three layers

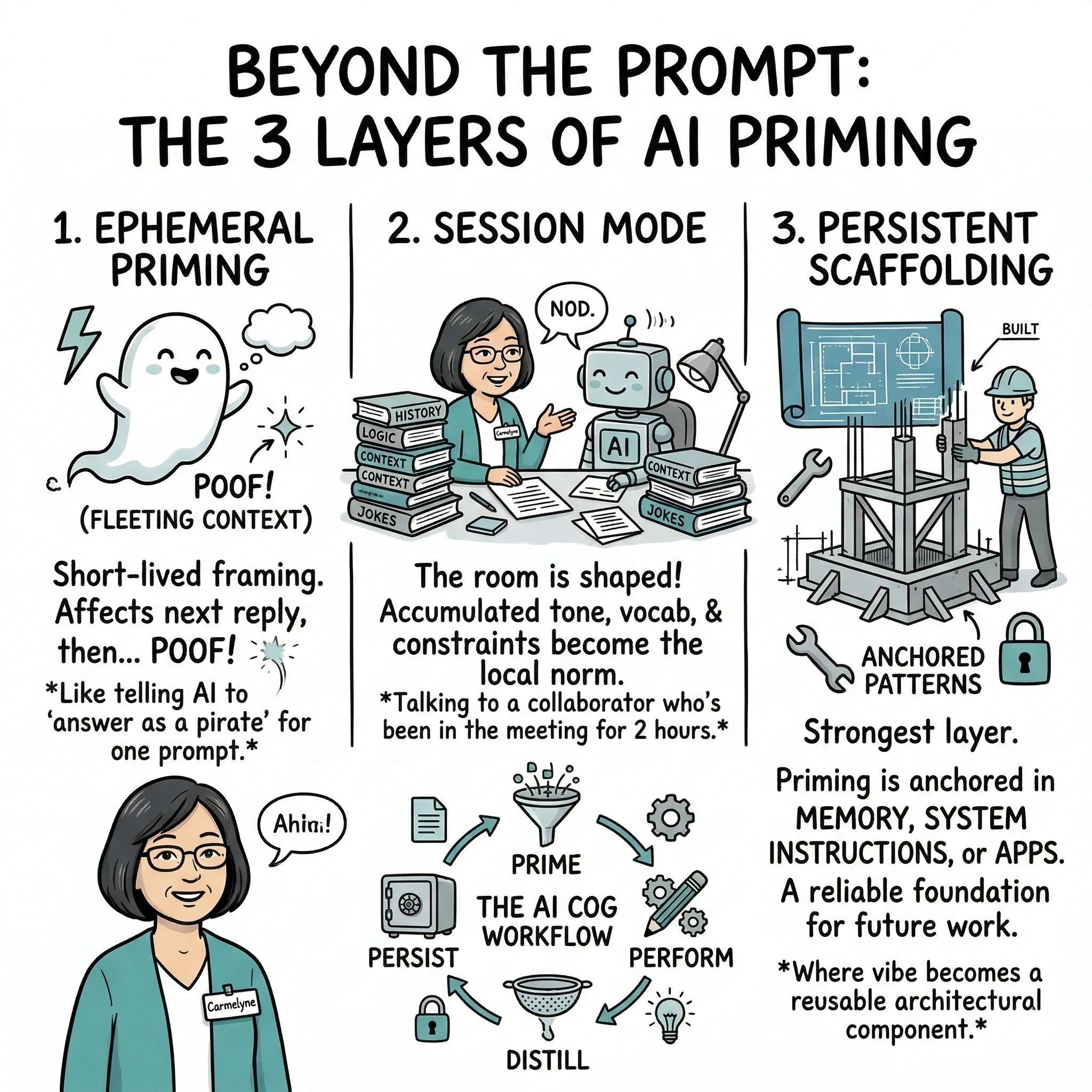

The cleanest way I’ve found to think about priming is in three layers.

1. Ephemeral priming

This is the short-lived version. It affects the current answer or the next few turns, then fades.

“Answer like a mathematician.”

Useful, but not durable by itself. The model shifts its style for a moment — cleaner definitions, more stepwise reasoning — but ask it something unrelated and the shape dissolves.

2. Session mode

This is where it gets interesting. Earlier turns stay in context, and the model starts treating the accumulated tone, vocabulary, and constraints as the local norm. The room has been shaped — you don’t have to re-explain the rules every turn.

I noticed this when working on a memory system for an app. Early in a session, I had to spell things out:

“I’m thinking about persistence layers, not just prompt tricks.”

By mid-session, I could just say:

“What about decay?”

And the model already knew I meant memory decay in a persistence architecture — not radioactive decay, not tooth decay. That short prompt carried the weight of everything before it. That’s session mode doing its thing.

The difference is like talking to a collaborator who’s been in the room for two hours versus one who just walked in. Both are competent. Only one has context.

3. Persistent scaffolding

This is the strongest layer. If a pattern is going to survive beyond the session, it has to be anchored somewhere:

- memory

- context retention

- hidden instructions

- tool state

- explicit architecture for persistence

If a pattern is going to survive beyond the session, it has to be anchored somewhere — memory systems, retained context, system instructions, tool state, or some kind of architecture designed for persistence.

This is where priming stops being vibe and becomes something you can actually rely on. And honestly, once I started thinking about it this way, it changed how I build things. The question stopped being “how do I prompt this better” and became “which layer does this belong in?”

Why this matters

If you are building apps or trying to make your work with AI more repeatable, this distinction matters.

You have to decide what belongs where.

Some things should stay momentary. Some should last for a work session. Some deserve to be saved and reused.

If you store everything, memory turns into sludge. If you store nothing, you keep reteaching the model the same dance.

Prime → Perform → Distill → Persist

The most useful pattern I’ve landed on is a four-step cycle:

## THE_AI_COG_WORKFLOW

step_01: PRIME # Set the mode

step_02: PERFORM # Do the work

step_03: DISTILL # Find the signal

step_04: PERSIST # Save what holds

Prime

Set the mode for the task. Give the model the orientation it needs.

Perform

Do the work inside that mode.

Distill

Look at what actually helped.

Persist

Look at what actually helped. Not everything that worked was signal.

That last step matters more than people think.

A good moment does not automatically mean a lasting preference. A successful exchange might only prove that something worked here. It does not always deserve promotion into memory or system design.

Signal vs. Exhaust

The real skill is not setting the mood. It is deciding what was signal and what was exhaust.

Signal is the pattern worth keeping — the orientation, the framing, the constraint that made the work better and will probably make future work better too.

Exhaust is what did its job and can go — the scaffolding you needed to get to the insight, the prompt that unstuck a conversation, the warm-up turns before things clicked.

If you store everything, memory turns into sludge. If you store nothing, you keep reteaching the model the same dance.

The real skill isn’t setting the mood. It’s learning to tell the difference between what was valuable and what was just warm.

That’s the part worth getting good at.

Explore the Framework

These concepts are part of a broader framework for building intent-aware AI systems. I've distilled these strategies into a short, practical guide called Thinking Modes.

View the Book →