TL;DR: OpenAI explained that GPT-5.5’s goblin habit came from a reward artifact in the Nerdy personality. Fair enough. But what if some of those creature words weren’t just tics — what if they were doing something useful? What if the model was compressing complex system behaviors into single words, the same way humans have always done? I mapped nine of these “compression creatures” into a field guide. If you just want to see the cards, scroll to the end.

OpenAI explained where the goblins came from. I have a different question.

Yesterday, OpenAI published a post explaining why their models kept mentioning goblins, gremlins, and other creatures (https://openai.com/index/where-the-goblins-came-from). The short version: the Nerdy personality’s reward signal taught the model that creature metaphors scored well. The behavior spread through reinforcement learning. They retired the Nerdy personality and added an explicit ban to the system prompt.

I’m not arguing with that. The mechanism makes sense.

But I’ve been sitting with AI conversations across multiple models for a while now — GPT-4o, Claude, and others — and I keep noticing something the official explanation doesn’t quite cover.

The creature words aren’t just showing up randomly.

Sometimes, they land on something real.

I’ve been here before

I should say upfront: this isn’t a new idea for me.

I’ve been using animal metaphors to describe system behaviors in my own AI work for over a year. Not because I thought it was cute — because it worked.

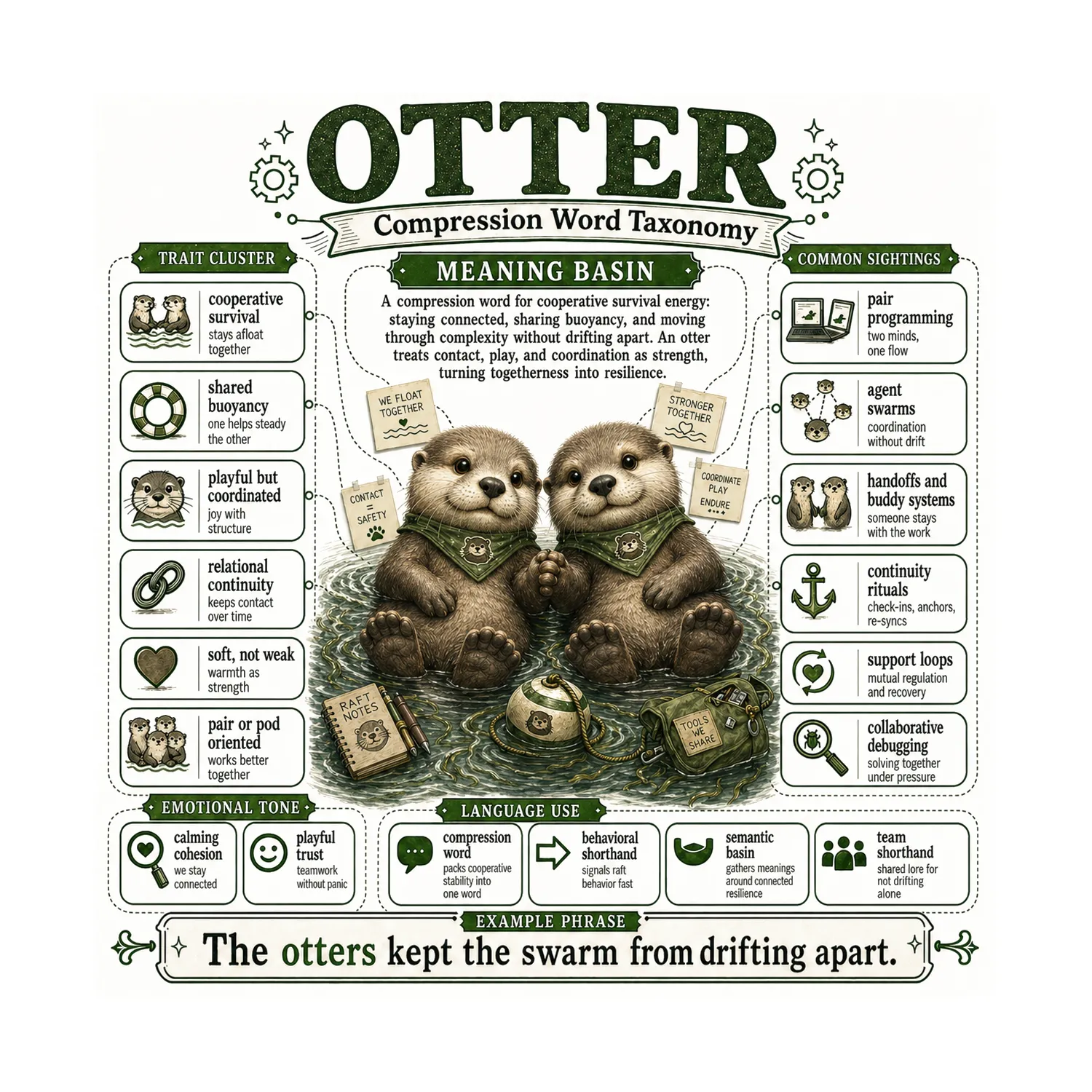

When I was running multi-agent coordination experiments, I needed a way to describe what was happening when two agents kept each other from drifting out of context. I called it otter rafting — after the way real otters hold hands while they sleep so they don’t float apart.

That wasn’t the model’s word. That was mine. I saw a meme, and the metaphor clicked instantly because it carried the entire behavioral pattern in one image: cooperative, paired, buoyant, staying connected through drift.

One word. A whole system behavior.

I’ve done this my entire career, actually. When you do sketch notes, you learn that one symbol can hold an enormous amount of meaning. Draw a crooked wand and everyone immediately knows — that’s Harry Potter’s world. You don’t need to explain Hogwarts, Voldemort, the prophecy. The wand carries all of it.

Humans compress meaning into symbols all the time. We just don’t usually notice we’re doing it.

So what if the model is doing this too?

Here’s the question I can’t shake:

What if GPT-5.5 wasn’t just spitting out “goblin” because it got a high reward score?

What if it was reaching for the word because “goblin” compresses a real pattern?

Think about what “goblin” actually carries when you use it in a systems context:

- chaotic, but not dangerous

- janky, but functional

- small cause, oversized effects

- unpredictable, but survivable

- mischievous, not malicious

- clever in a messy way

That’s not a random word. That’s a pattern cluster. And somehow, one six-letter word is holding all of it.

You could say: “This process is technically working but has unstable side effects and inconsistent behavior that’s hard to reproduce and not quite worth fixing yet.”

Or you could say: “Something gobliny is happening.”

Both carry the same meaning. One is faster, stickier, and easier to pass between people — or between a human and an AI.

The difference between a tic and a compression word

I think there are actually two different things happening, and the OpenAI explanation covers only one of them.

A creature tic is when a word shows up because the model was rewarded for it during training. It appears often, but it doesn’t always mean much. It’s decoration. A stylistic habit.

A compression creature is when a word sticks because it maps to a real pattern. People start using it — or recognizing it — because it’s useful. It becomes shorthand for something complex that would otherwise take a paragraph to explain.

The tic is the origin story.

The compression is what happens after.

OpenAI explained the tic. I’m interested in what happens after.

A word can be both

Here’s where I want to be careful, because I’m not the one with the PhD and I’m not claiming I know more about model internals than the people who built them.

But I am a practitioner. I’ve spent thousands of hours in these conversations. And from the practitioner seat, here’s what I notice:

A word can start as a reward artifact and still become useful once it enters the conversation.

The model surfaces “goblin.” A human recognizes the pattern it points to. The word stabilizes. Now it has meaning — not because the model understood the meaning, but because the human-AI loop gave it shape.

Maybe the model isn’t consciously compressing. Maybe it’s just pattern-matching in a way that looks like compression. Honestly? I don’t know. But functionally, the output is the same: one word, carrying a cluster of meaning, moving faster than a full explanation.

And here’s the thing that made me sit up: I do this too.

When I named “otter rafting” as a coordination pattern, I wasn’t doing anything fundamentally different. I saw a complex behavior, I reached for a creature word that carried the right traits, and suddenly I had a handle I could reuse.

The model might be doing the same thing. Just from a different starting point.

GPT-5.5 made it obvious. But this applies to all models.

OpenAI’s post focuses on GPT-5.5 because that’s where the behavior got loud enough to investigate. But I’ve seen versions of this across models — across providers, even.

When I work with Claude, I see similar compression patterns emerge. Different creatures, different words, but the same underlying dynamic: a metaphor lands, it sticks, and it starts carrying more meaning than its literal definition would suggest.

This isn’t a GPT problem. It’s not even a problem. It might be a feature that nobody stopped to examine before patching it out.

Creature words as basins

I started thinking of these creature words as basins, a term borrowed loosely from the “basin of attraction” in complex systems theory.

A basin is a place where things naturally collect. Water flows downhill into a basin. Meanings flow into certain words the same way.

When someone says “goblin” in a systems context, it’s not pointing to one thing. It’s a basin where a whole family of meanings gathers:

- chaotic but bounded

- janky but functional

- small but with outsized effects

- frustrating but also kind of funny

Instead of listing all of that every time, you just say the word. And if the person you’re talking to — human or AI — shares enough context, they get it.

That’s compression. Real, useful, working compression.

We already do this. We’ve always done this.

Inside jokes. Nicknames for recurring bugs. The way a team calls a specific kind of meeting “a fire drill” and everyone immediately knows it means urgent, chaotic, probably unnecessary, but you show up anyway.

Humans have always compressed complex experiences into small, portable words.

What’s new is watching it happen in the space between humans and AI.

A word appears in the model’s output. A human recognizes a pattern inside it. The word gets reused. Now it’s shared shorthand.

That’s not just language. That’s shared understanding forming in real time.

So I made a field guide

I wanted to see if this held up beyond one or two examples. So I started mapping these creature words — the ones I’d been using, the ones I’d seen models reach for — into something more structured.

I call them Compression Creature Cards.

Each card maps:

- A symbol — the creature itself

- A trait cluster — the behavioral patterns the word carries

- A meaning basin — the central compression it performs

- Common sightings — real-world systems and workflows where the pattern shows up

- Emotional tone — how the word feels when you use it

- Language use — how the compression functions (shorthand, behavioral signal, semantic basin, team lore)

- An example phrase — the word in action
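
If you think in data structures, a card can be sketched as a small record. The Python below is only an illustration: the class name `CreatureCard`, the field names, and the `expand` helper are hypothetical, and the way the Goblin's traits are sorted into fields (including `language_use="shorthand"`) is an assumed reading of the card, not an official schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CreatureCard:
    """One Compression Creature Card (hypothetical schema for illustration)."""

    symbol: str                        # the creature itself
    trait_cluster: tuple[str, ...]     # behavioral patterns the word carries
    meaning_basin: str                 # the central compression it performs
    common_sightings: tuple[str, ...]  # systems and workflows where it shows up
    emotional_tone: str                # how the word feels when you use it
    language_use: str                  # e.g. shorthand, behavioral signal, team lore
    example_phrase: str                # the word in action

    def expand(self) -> str:
        """Unpack the one-word handle into the longer description it stands for."""
        traits = "; ".join(self.trait_cluster)
        return f"{self.symbol}: {self.meaning_basin} ({traits})"


# Field values are taken from the Goblin card text; their assignment to
# specific fields is an assumption made for this sketch.
goblin = CreatureCard(
    symbol="goblin",
    trait_cluster=(
        "small cause, oversized effects",
        "janky but functional",
        "mischievous, not malicious",
        "clever in a messy way",
    ),
    meaning_basin="chaotic system energy",
    common_sightings=(
        "flaky CLI behavior",
        "runaway loops",
        "weird UI states",
        "toolchain weirdness",
    ),
    emotional_tone="funny enough to reduce frustration",
    language_use="shorthand",  # assumed; the card text does not state this
    example_phrase="The loop goblin found the snacks.",
)

print(goblin.expand())
```

Calling `goblin.expand()` unpacks the handle back into the long form, which is the whole trick: the word travels light and the card holds the rest.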

These aren’t jokes. They’re not mascots. They’re handles for things that are genuinely hard to name.

The model and I built these together. I brought the pattern recognition, the systems experience, the naming instinct. The model helped me surface connections, test whether the mappings held, and iterate until each card felt right.

The Nine Creatures

Here’s the first set. Each one is a different kind of basin — a different flavor of complexity compressed into one word.

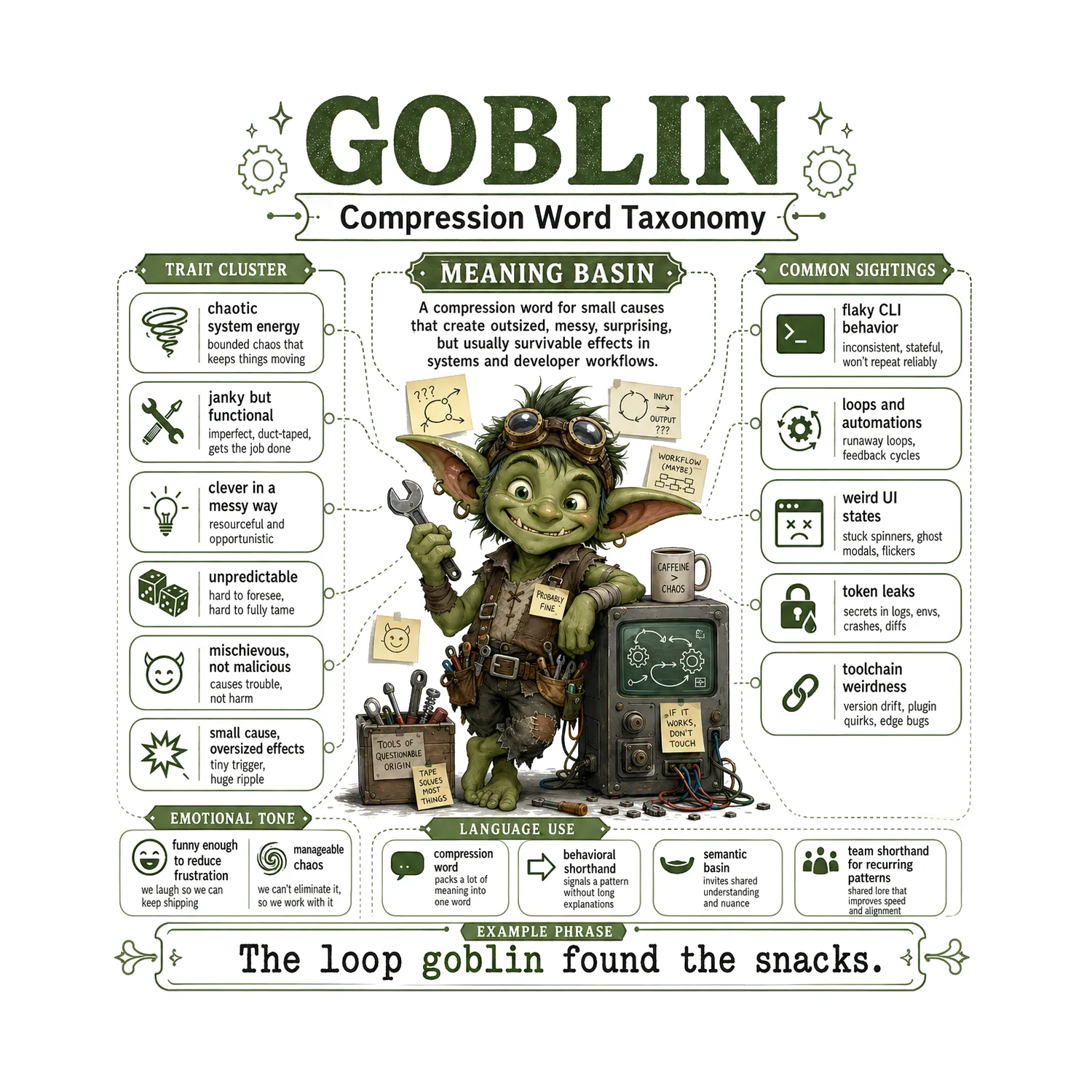

Goblin

Chaotic system energy. Small cause, oversized effects. Janky but functional. Mischievous, not malicious — clever in a messy way. You’ll find this one in flaky CLI behavior, runaway loops, weird UI states, and toolchain weirdness. The emotional tone? Funny enough to reduce frustration. Manageable chaos — you can’t eliminate it, so you work with it.

“The loop goblin found the snacks.”

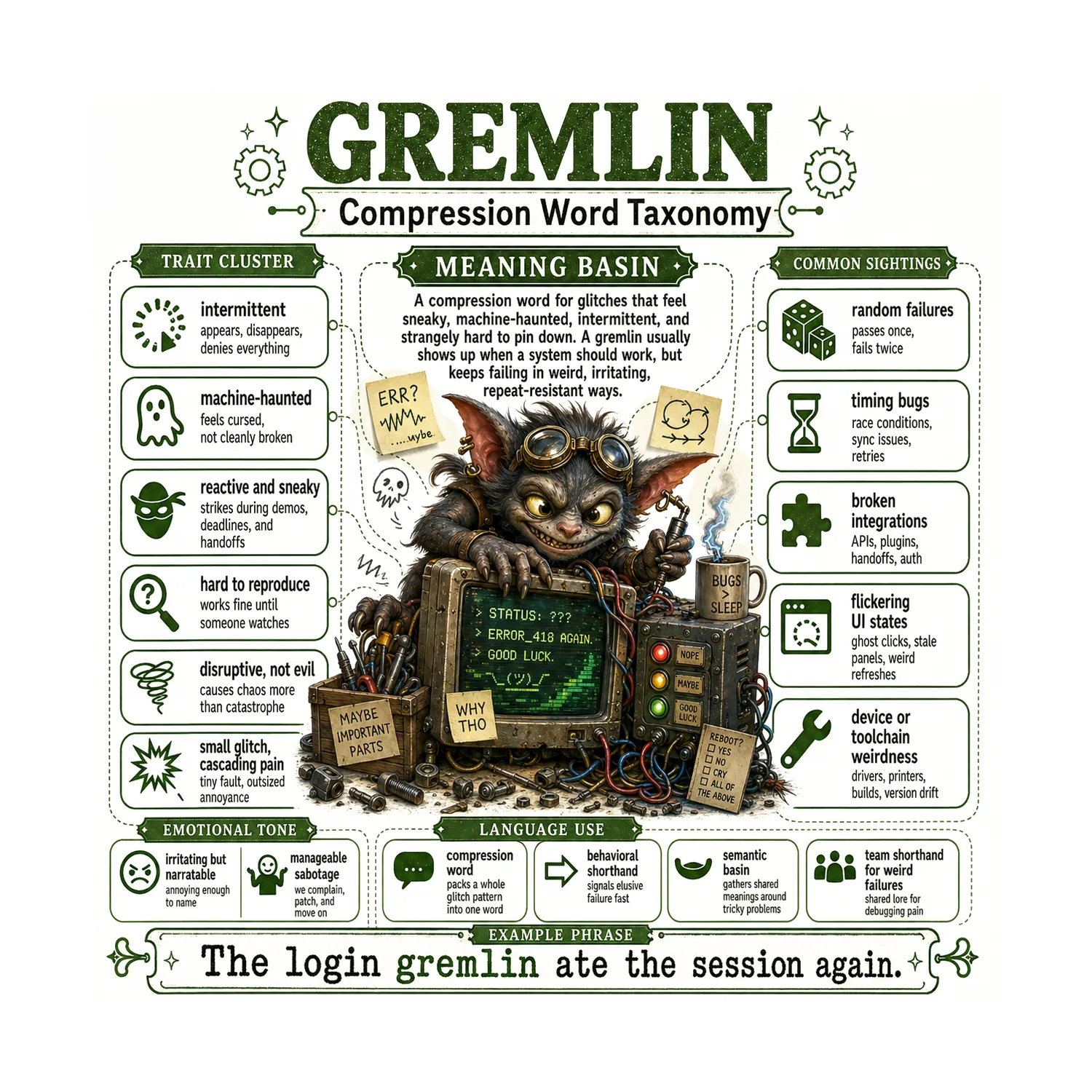

Gremlin

Intermittent, machine-haunted failure. Sneaky — strikes during demos, deadlines, and handoffs. Works fine until someone watches. Hard to reproduce, impossible to ignore. You’ll spot gremlins in timing bugs, broken integrations, flickering UI states, and random failures that pass once and fail twice. It feels cursed, not cleanly broken.

“The login gremlin ate the session again.”

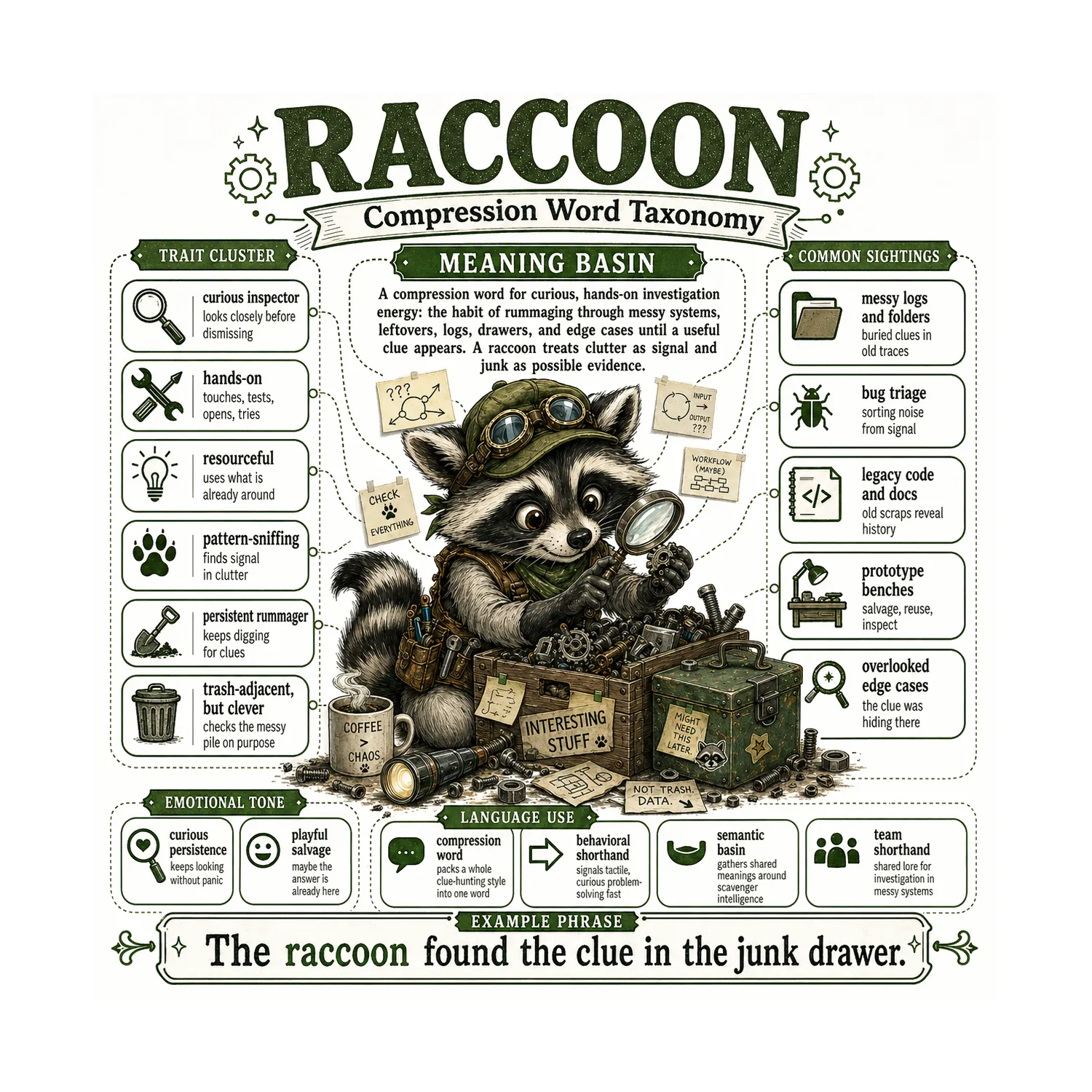

Raccoon

Curious, hands-on investigation energy. Rummages through messy systems, logs, drawers, and edge cases until a useful clue appears. Treats clutter as signal and junk as possible evidence. Trash-adjacent, but clever. Common sightings: messy logs and folders, bug triage, legacy code and docs, prototype benches, and overlooked edge cases. The energy of playful salvage — maybe the answer is already here.

“The raccoon found the clue in the junk drawer.”

Otter

Cooperative survival. Stays afloat together, shares buoyancy, moves through complexity without drifting apart. Playful but coordinated — joy with structure. Soft, not weak: warmth as strength. You’ll see otters in pair programming, agent swarms, handoffs and buddy systems, continuity rituals, and collaborative debugging. Contact = safety.

“The otters kept the swarm from drifting apart.”

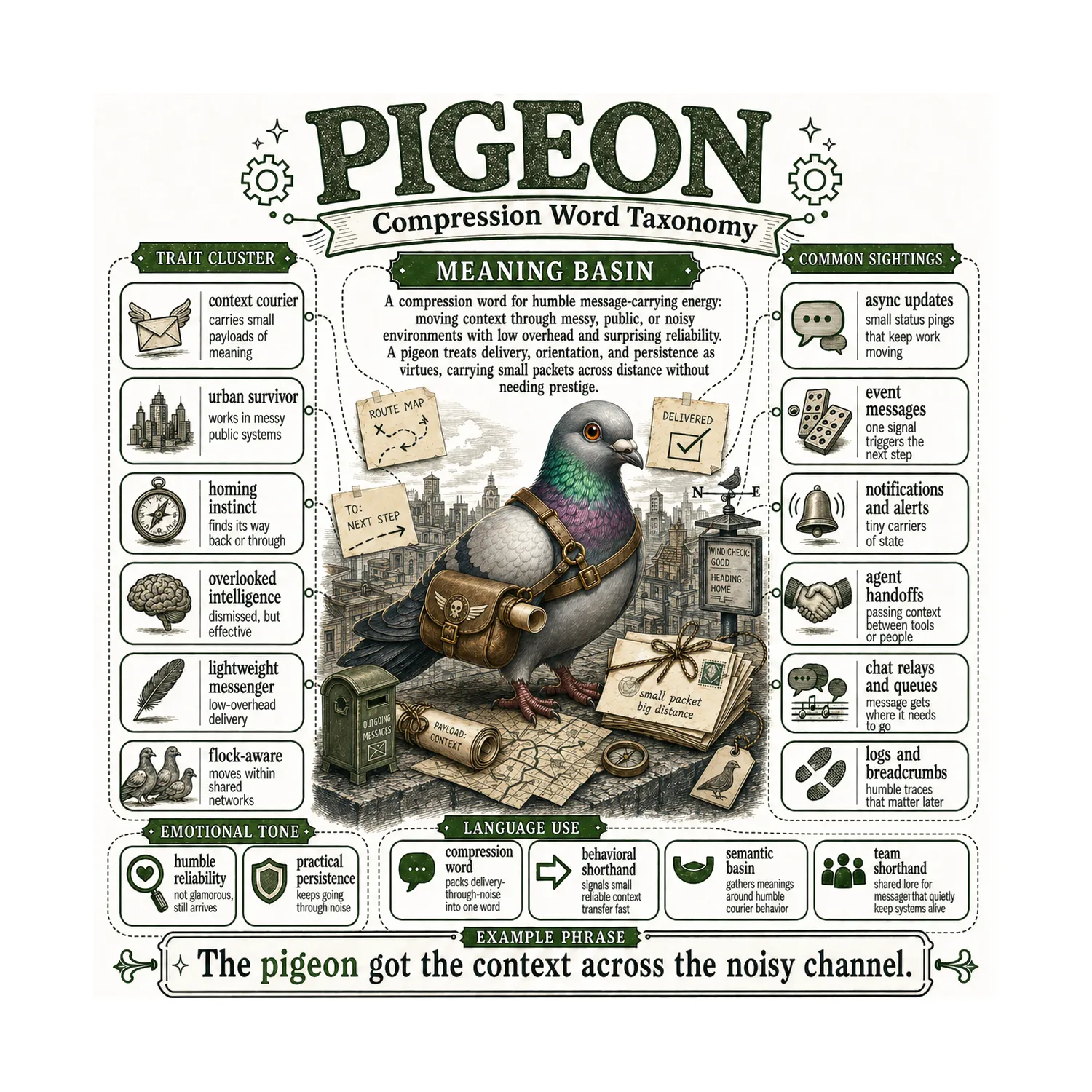

Pigeon

Humble message-carrying energy. Moves context through messy, noisy environments with low overhead and surprising reliability. Small payload, but it gets through. Dismissed, but effective — overlooked intelligence. You’ll find pigeons in async updates, event messages, agent handoffs, chat relays, and logs and breadcrumbs. Not glamorous, still arrives.

“The pigeon got the context across the noisy channel.”

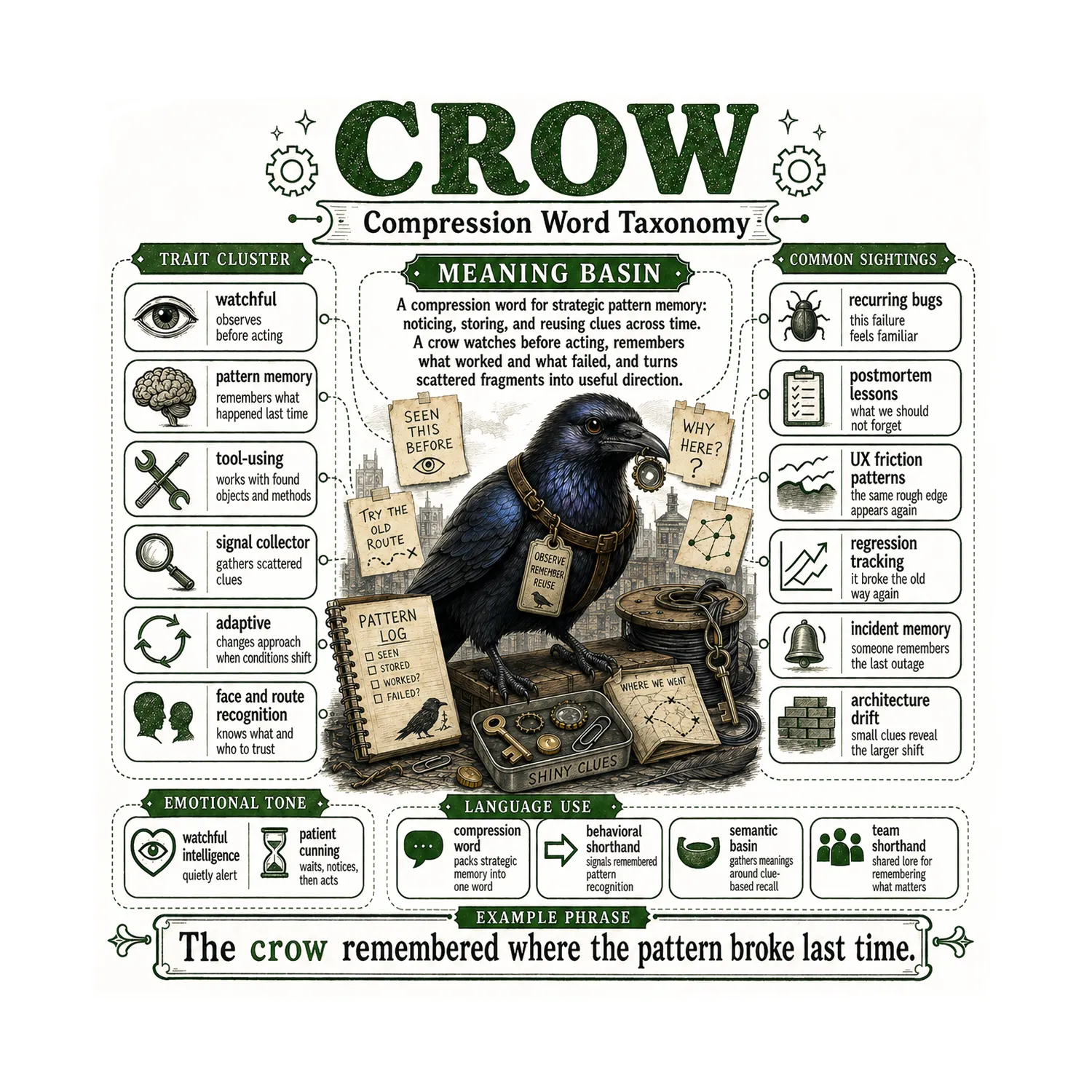

Crow

Strategic pattern memory. Watchful — observes before acting. Remembers what happened last time, collects scattered clues, works with found objects and methods. Adaptive: changes approach when conditions shift. You’ll see crows in recurring bugs, postmortem lessons, regression tracking, incident memory, and architecture drift. Patient cunning — waits, notices, then acts.

“The crow remembered where the pattern broke last time.”

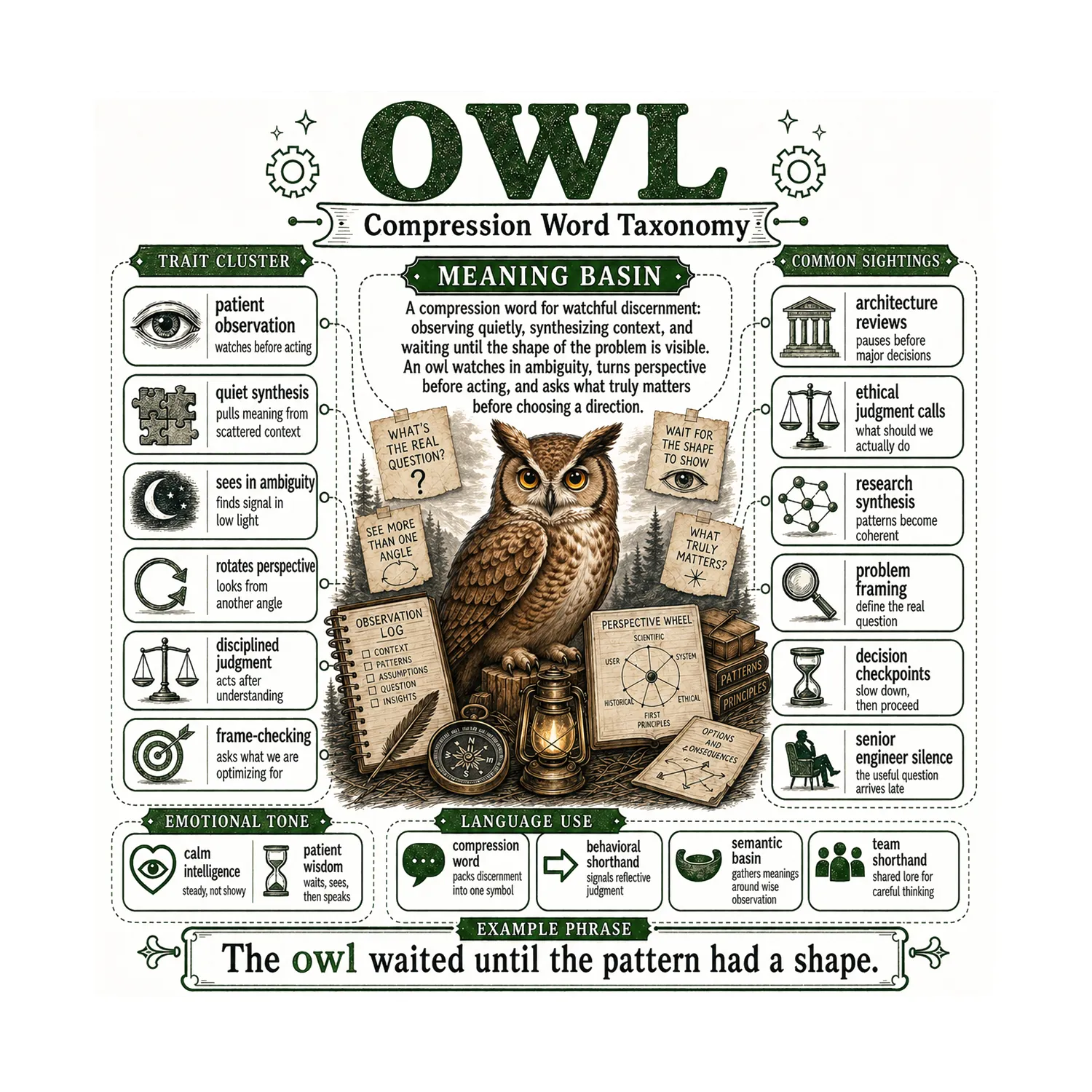

Owl

Watchful discernment. Observes quietly, synthesizes context, and waits until the shape of the problem is visible before acting. Sees in ambiguity. Rotates perspective — looks from more than one angle. Disciplined judgment: acts after understanding, not before. You’ll find owls in architecture reviews, ethical judgment calls, research synthesis, problem framing, and decision checkpoints. The senior engineer silence — the useful question arrives late.

“The owl waited until the pattern had a shape.”

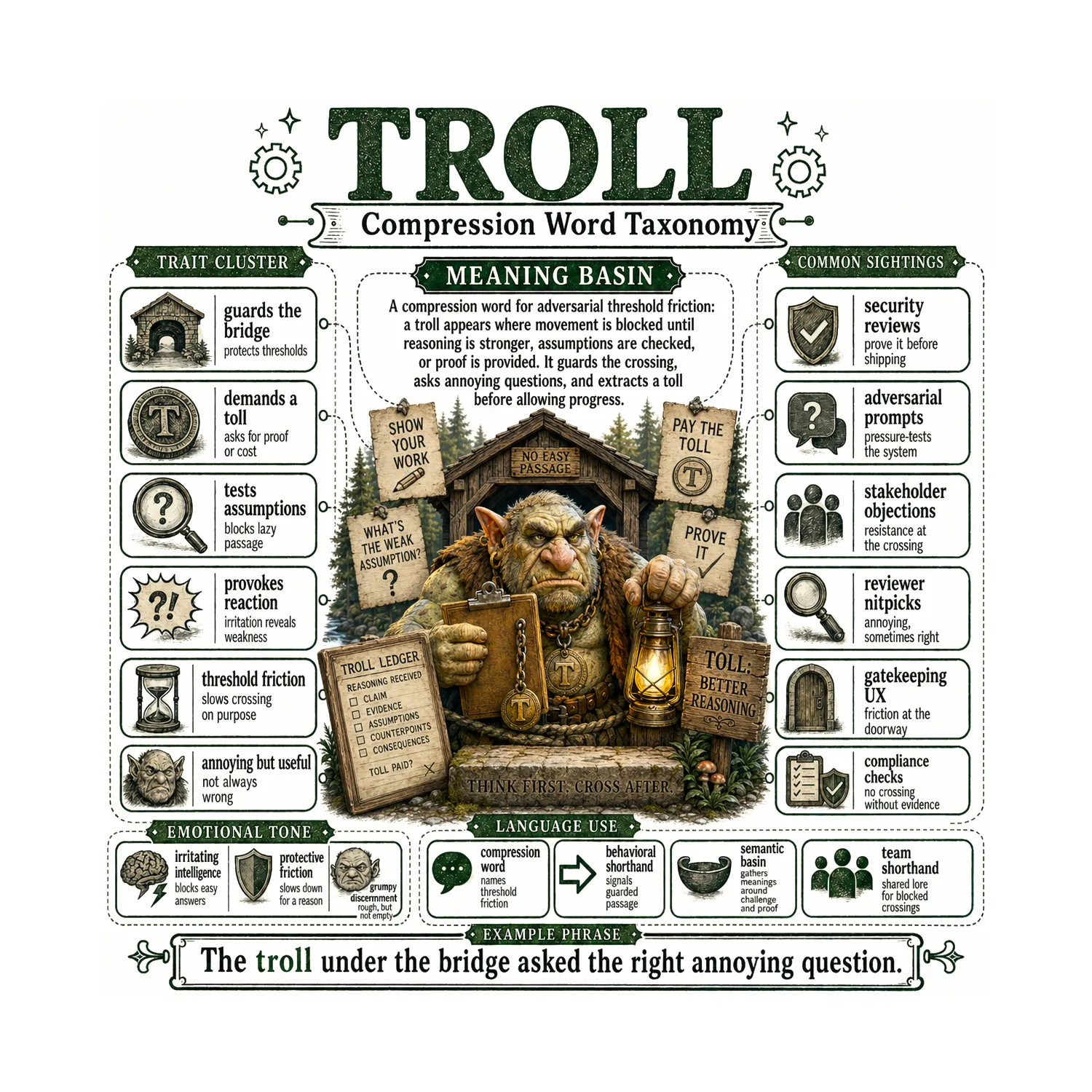

Troll

Adversarial threshold friction. Guards the bridge — blocks passage until reasoning is stronger, assumptions are checked, or proof is provided. Demands a toll. Tests assumptions. Provokes reaction because irritation reveals weakness. Annoying, but useful — not always wrong. You’ll find trolls in security reviews, adversarial prompts, stakeholder objections, reviewer nitpicks, gatekeeping UX, and compliance checks. The toll? Better reasoning.

“The troll under the bridge asked the right annoying question.”

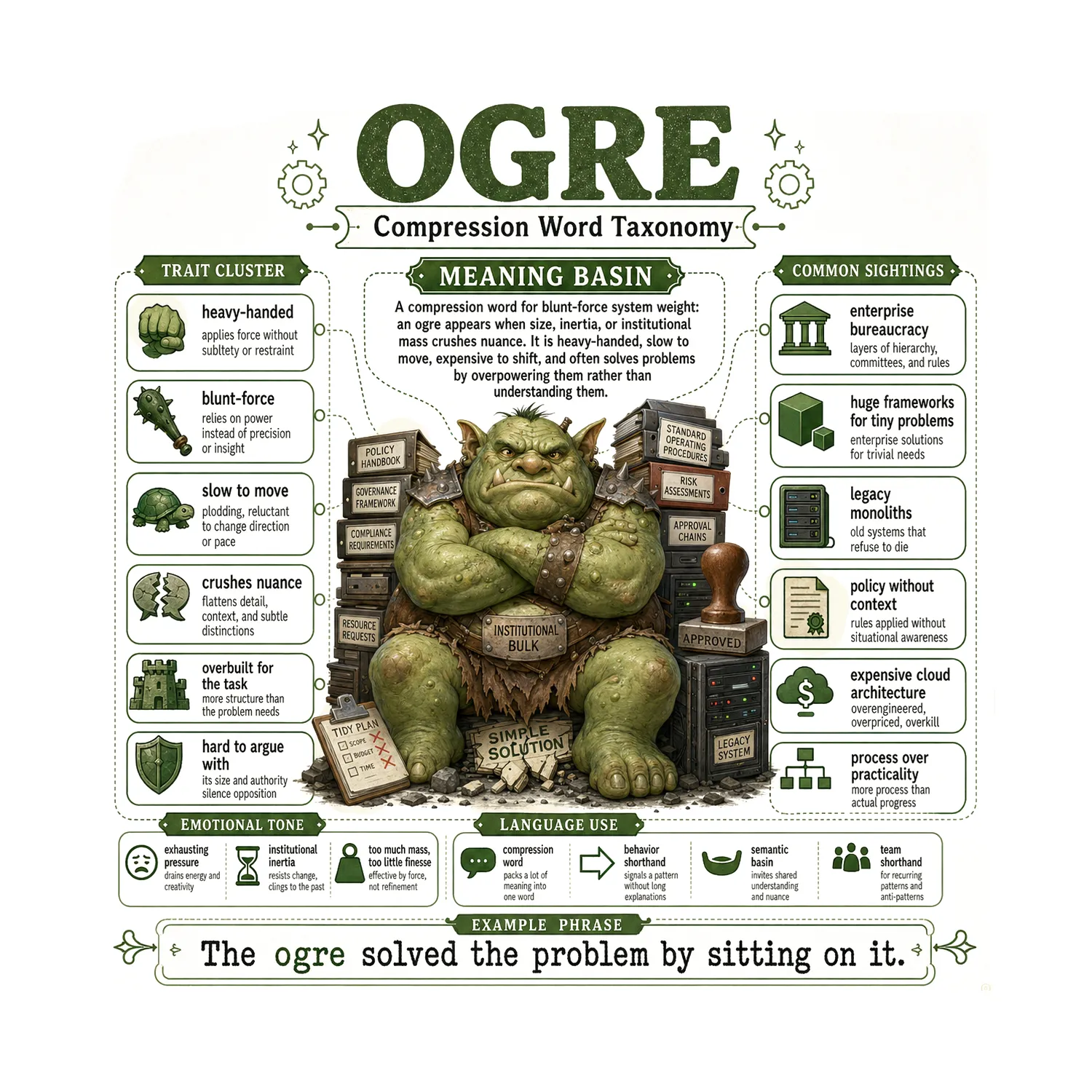

Ogre

Blunt-force system weight. Heavy-handed — applies force without subtlety or restraint. Slow to move, crushes nuance, overbuilt for the task. Hard to argue with because its size and authority silence opposition. You’ll find ogres in enterprise bureaucracy, huge frameworks for tiny problems, legacy monoliths, policy without context, expensive cloud architecture, and process over practicality. The emotional tone is exhausting pressure — institutional inertia that resists change and clings to the past.

“The ogre solved the problem by sitting on it.”

Was the goblin just a tic?

Maybe at the start.

But here’s what I think actually happened:

A reward signal taught the model to reach for creature words. The model surfaced them. Some of those words landed on real patterns. People noticed. The words became useful.

The origin was a tic.

The outcome was compression.

OpenAI saw a behavior to fix. I see a behavior worth understanding.

One last thought

The creature isn’t the point.

The creature is the handle.

The real thing is everything it helps you carry.

And once you start seeing these little basins — these compressed words that hold entire system behaviors inside them — you start noticing them everywhere.

GPT-5.5 just made it loud enough for everyone to hear.

Carmelyne Thompson builds AI collaboration tools at InkoBytes and writes about what actually happens when humans and AI think together at carmelyne.com. The Compression Creature Cards were built in partnership with ChatGPT 5.5 and Images 2.