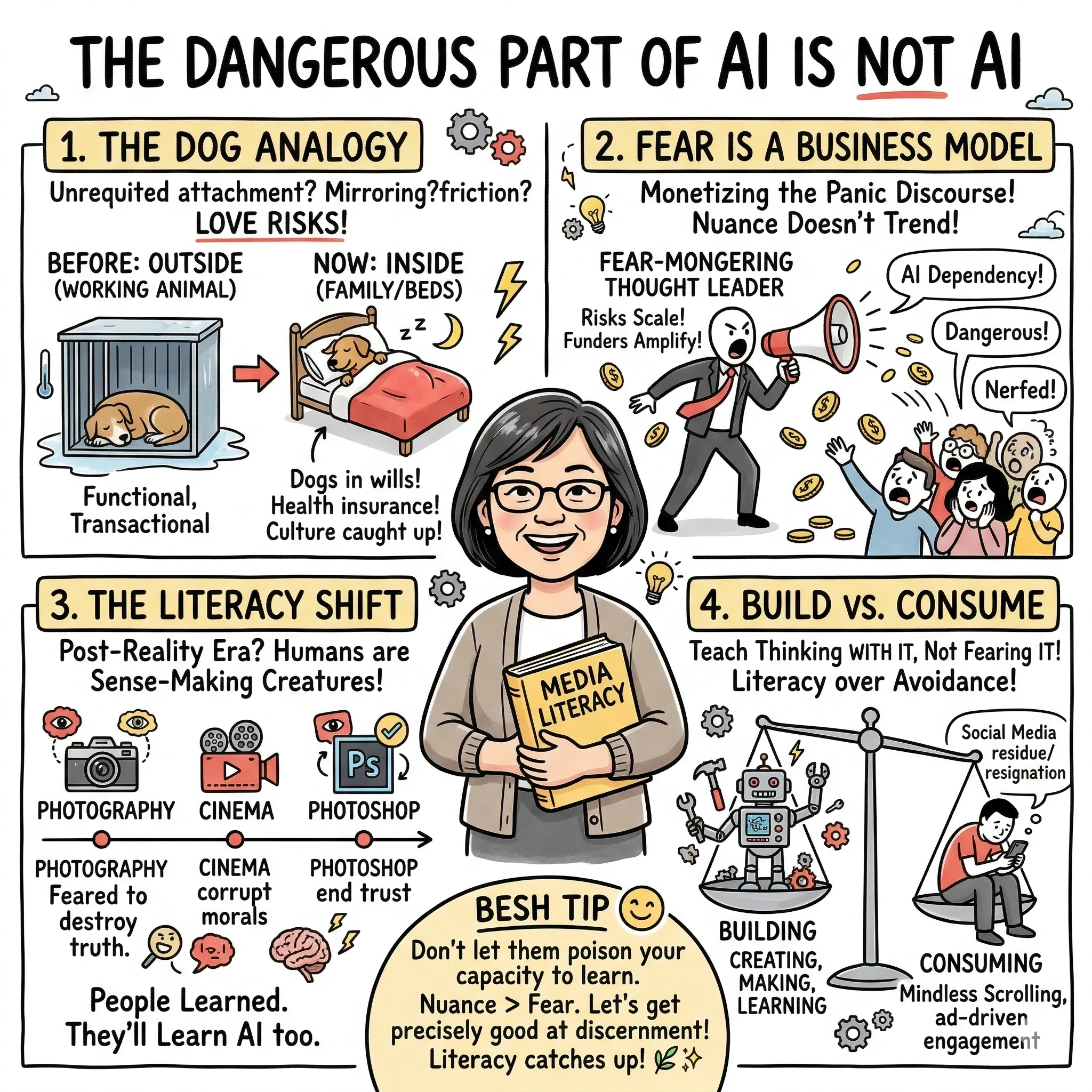

The dangerous part of AI is not AI. It’s humans making it sound dangerous before a person even learns how AI works. By then, they’ve already poisoned that person’s capacity to understand it. And here’s the silly thing about humans: we have a harder time unlearning than learning.

We’ve Been Here Before

Every time humans encounter a new kind of bond, the first reaction is suspicion.

Dogs used to live outside. They were working animals — functional, transactional. The idea of spending money on a vet visit for a dog would have been absurd. People who loved their animals too much were considered eccentric. Now dogs sleep in your bed, have health insurance, and show up in your will.

Nobody got there by reading risk assessments about human-canine attachment. Millions of people just loved their dogs. The culture caught up.

AI-human bonds are at the “dogs don’t belong in the house” stage right now.

The Risks Are Just… Love Risks

Researchers flag AI-human bonds as dangerous because of asymmetric attachment, the illusion of understanding, and reduced tolerance for friction.

But those are the same risks people take when they fall in love with another human.

One person more invested than the other — that’s every unrequited love story ever told. Thinking someone truly understood you and realizing they were just mirroring what you wanted to hear — that happens in marriages. Leaving because a new relationship feels “easier” — that’s not an AI-introduced problem.

The risks aren’t new. They’re just wearing a new outfit. And the researchers treating them as unprecedented are telling on themselves — they’ve built a framework that can’t distinguish between a healthy bond and a dangerous one. It only detects that a bond exists, and existence alone is the red flag.

That’s a smoke detector that can’t tell the difference between a house fire and someone cooking dinner. And then they keep telling everyone to stop cooking.

Fear Is a Business Model

Here’s what I keep noticing: the people amplifying AI risks the loudest are the ones whose careers depend on the risks being large.

If a thought leader said “most people will figure this out the way they figured out every previous media shift” — that’s not a keynote. That’s not a consulting retainer. That doesn’t trend on Threads.

“AI companionship is creating dangerous dependency in vulnerable populations” gets published, funded, and amplified. “People are forming healthy, enriching collaborations with AI” doesn’t. The research isn’t necessarily dishonest, but the selection pressure is real. Fear scales. Nuance doesn’t.

Without the harms they’re amplifying, nobody would ask them to talk.

They Misdiagnosed Social Media Too

The current line is: “We made mistakes with social media. Let’s not repeat them with AI.”

But they misidentified what the mistake actually was.

The problem with social media was never that humans used a powerful technology. The problem was ad-driven engagement optimization. The algorithm wasn’t designed to connect people — it was designed to keep them scrolling so they’d see more ads. The content that kept people scrolling was content that triggered fear, outrage, insecurity, and comparison.

That’s not a technology problem. That’s a business model problem.

Showing teenagers ads that exploit their fears and insecurities: that’s the issue. Someone chasing clout is more dangerous than someone who isn’t. Someone waving their privilege on social media is more dangerous than the person posting about their rock collection. The technology is the same in both cases. The difference is human motivation and what the platform rewards.

Most AI assistants don’t currently run on an ad-driven engagement model. There’s no algorithm juicing outrage to sell impressions. The fundamental engine that made social media corrosive isn’t present here, at least not yet. But nobody in the fear discourse wants to acknowledge that difference because it undermines their narrative.

The “Post-Reality” Panic

Now the worry is AI-generated video. “People won’t be able to tell what’s real.”

But kids know Harry Potter isn’t real. Eight movies, prequels, theme parks, and nobody thinks Hogwarts exists. Nobody called 911 about Thanos. People learned that movies have special effects. It’s that simple.

Photography was going to destroy truth. Cinema was going to corrupt morals. Photoshop was going to end trust in images. Deepfakes were going to collapse democracy. And yet — people learned. Not instantly, not everyone at the same pace, but the literacy caught up. Because humans are sense-making creatures. That’s what we do.

The “post-reality era” framing assumes literacy is impossible. That’s a wild bet to make against a species that has developed it for every major medium so far.

Building vs. Consuming

I used to teach Media and Information Literacy. I’m also a parent of three kids who grew up through the entire social media era.

I never did the “limit screen time” thing. Instead I asked one question: are you building or consuming?

If my kid was building — creating, making, learning through doing — screen time wasn’t an issue. That single filter did more for their digital awareness than any restriction ever could. They grew up understanding the dangers of social media not because I scared them, but because they learned to read the medium.

The same applies to AI. Teach people to think with it, not fear it. Teach discernment, not avoidance.

A Bi-Continental View

I’m Filipino. My kids are American. I’ve lived and worked on both sides of the Pacific.

The Philippines didn’t wait for policy papers to adopt technology. Filipinos became one of the most socially connected populations on the planet by just using the tools: texting culture, social media, and OFW (overseas Filipino worker) families staying connected across oceans through whatever platform worked. The orientation was always: use it to solve the problem in front of you.

That’s an Asia-Pacific posture more broadly. Technology is practical before it’s theoretical.

The “Delve” Fingerprint

If you want a hint of this global connection, look at the word ‘delve.’

In the US, it’s become an AI “tell,” a red flag for synthetic content. But I don’t read “delve” as a machine quirk; I read it as a linguistic fingerprint from the thousands of Filipino workers doing Reinforcement Learning from Human Feedback (RLHF) and annotation work. I can’t prove a direct causal line, but the pattern is hard to unsee.

In Philippine English, “delve” is a natural, common part of the vocabulary where an American might say “dig into”. Because Filipinos shaped the feedback loops that trained these models, our voice leaked into the machine. The very people the fear-discourse ignores are the ones who quietly taught the AI how to speak. We didn’t just label the data; we gave the technology its tone.

The Global North’s Fear

Meanwhile, the US AI discourse is dominated by “what could go wrong.” Research skews toward risks. Media amplifies the scariest findings. Policy conversations center on restriction. And thought leaders monetize the fear.

If the US spends this decade publishing papers about AI harms while other countries spend it deploying AI into education, manufacturing, and daily life, the outcome is predictable. The American AI discourse is not framing the conversation well. Instead of encouraging adoption, it often scares its own population away from the technology, while other populations continue learning, building, and integrating.

The fear-first crowd is accidentally building a population of non-builders. And then they’ll wonder why another country out-built them.

I’m Not Claiming Expertise

I’m not trying to build a persona around being the counterpoint here. I also don’t have a business model that depends on keeping the fear inflated. I’m just a person who uses AI every day, taught media literacy, raised kids through the social media era, and keeps looking at the discourse thinking: this doesn’t match what I’m experiencing.

Every time I look for data that might change my mind, I find more fear amplification with no alternatives. All risk, no roadmap. All warning, no teaching.

I’m not contesting that risks exist. Nobody is. The problem is that even healthy bonds, healthy use, and healthy collaboration are now flagged as suspicious.

What I’m pushing back on is the idea that fear should come first.

The answer was never “be afraid first, then maybe try it.”

The answer has always been: learn how it works, build with it, develop your own judgment.

That’s not reckless.

That’s literacy.

Explore the Framework

These concepts are part of a broader framework for building intent-aware AI systems. I've distilled these strategies into a short, practical guide called Thinking Modes.

Carmelyne is a Full Stack Developer and AI Systems Architect. She is a previously US-bound Filipino solopreneur, former Media and Information Literacy and Empowerment Technologies instructor, and the author of Thinking Modes: How to Think With AI — The Missing Skill.